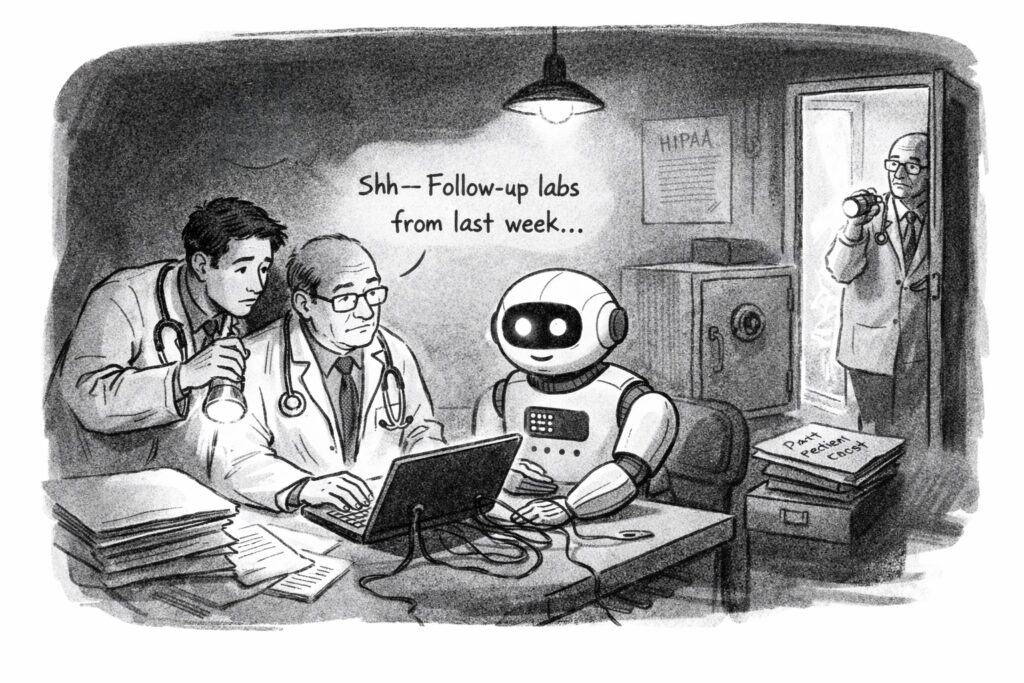

Trainee: “I cut and pasted the clinical note from the EHR into an AI tool to get a second opinion on management.”

Me: “Were you worried about HIPAA, or about how the AI company might use the data?”

Trainee: “Maybe. But I saw the senior resident in the ED do the same.”

Our institutional reluctance to decide, openly and practically, how AI should be used in patient care is already eroding something important in medical training. We are forcing students, residents, and young physicians into a new kind of doublethink: publicly honoring one set of rules while privately relying on another set of practices to get through the day and care for patients well.

Medicine has long contained smaller forms of this tension. I think of tests I learned to order not because they were likely to change management, but because the risk of not ordering them felt legally unsafe. Faced with a patient, I might describe that as “covering the bases,” even when I knew the test was unlikely to be useful and might even lead to false positives, extra cost, and unnecessary follow-up. Medicine has never been free of these mismatches between official rationale and lived practice. AI is making them larger, more frequent, and harder to ignore.

I first saw this clearly in early 2023. ChatGPT had only recently entered public awareness, and physicians almost immediately found clever ways to use it for tedious administrative work. One example was feeding it the contents of a patient note and asking it to draft an appeal to an insurer for a referral or procedure. What had taken many minutes could suddenly be done in seconds.

The ingenuity was impressive. The compliance problem was obvious.

In many settings, pasting identifiable patient information into publicly available AI systems could run afoul of privacy rules and institutional policies, especially when no business associate agreement or equivalent contractual protection was in place. Yet that did not stop people. The tools were simply too useful. Today, some health systems do have contractual arrangements with leading AI vendors that provide stronger protections and limit how submitted data may be retained or used. But those protections still do not apply to many of the models that clinicians can easily access on their own.

Over the past year, in conversations with medical students and trainees around the country, I have heard the same pattern again and again. The AI tools available inside approved hospital environments are often weaker, harder to use, or less helpful than the best systems available to the general public. So trainees improvise. They compare models. They exchange tips. They gravitate toward whatever seems most capable of helping them think through a diagnostic problem, frame a management plan, or communicate more effectively.

They are not doing this because they are naïve. Quite the opposite. Most are well aware that AI systems can hallucinate, omit, and mislead. But they also know that these tools can jog memory, widen a differential, reframe a problem, and help them express a plan more clearly. When I ask how they justify the regulatory risk, the answer is usually some version of one of two things: they learned the behavior from those slightly ahead of them, or they believe that, in the moment, the benefit to the patient outweighs the institutional rule they are bending.

That is not a healthy equilibrium. It is ethically unstable, legally exposed, and educationally corrosive. A recent NEJM AI editorial captured this tension well: clinicians are making pragmatic tradeoffs in the face of real need, but they are doing so in a vacuum of institutional clarity.

So what should healthcare institutions do?

One response is restrictive. Hospitals can limit AI use to tools vetted by the institution or bundled by the EHR vendor, and treat outside use as a serious compliance violation even when clinicians access those tools through personal accounts. That approach has the appeal of clarity. But it is unlikely to work for long if the permitted tools are materially worse than what is available elsewhere. Trainees will not stop comparing quality simply because leadership wishes they would.

The better response is forward-looking. Institutions should acknowledge three realities at once: these tools are already clinically influential; their capabilities will change rapidly; and no single company is likely to remain best indefinitely. On that basis, hospitals and medical schools should make safe AI use part of formal clinical apprenticeship. They should teach where AI helps, where it fails, what kinds of patient data can and cannot be used in which settings, how outputs should be checked, and how responsibility remains with the clinician. At the same time, healthcare leaders should negotiate flexible privacy-preserving agreements with multiple vendors so that clinicians can use high-performing tools lawfully, compare them directly, and develop informed judgment about their strengths and weaknesses.

If enough healthcare institutions demand that kind of access, more AI vendors will create the contractual and technical mechanisms needed to support it.

The restrictive path will not just be frustrating. It will be demoralizing. Years ago, I wrote about how clunky and antiquated much of our EHR infrastructure felt compared with the tools available to ordinary teenagers outside medicine. That gap was not trivial; it contributed to burnout. We now risk repeating the same mistake with AI, but on a larger scale.

If we force clinicians to choose between following outdated institutional constraints and using the best available tools to help patients, many will choose the latter, quietly. That silence is the real danger. Healthcare institutions should not train the next generation to hide their use of AI. They should train them to use it well, lawfully, critically, and in the open.